Windows Azure: An Introduction

At last year’s PDC Microsoft released the details of its new venture into the next IT paradigm that is arguably set to change the way that applications are developed, hosted, managed and funded - Cloud Computing. It is easy to dismiss Cloud Computing as a fad or simply as a move back towards the mainframe days of a central processing model, but regardless of these debates there is no doubt that Microsoft, Amazon and Google are pouring large amounts of funding into developing Cloud Computing platforms. I’m not going to debate the subject of Cloud Computing, although I will state that personally I feel it will impact all that we do in IT in the future, perhaps not in its current guise, but this latest move from Microsoft can be seen as one more step on that journey.

What is Windows Azure?

Well it’s not an image of Windows Server hosted somewhere on the internet for you to remote desktop into and install what you like on it. To quote Microsoft it is (in Marketing speak) a “Platform for writing highly scalable and available applications”.

It’s neither currently possible nor advisable to just convert your current application to run on Azure. Instead, Azure provides a platform on which you can build a new application that is highly scalable and available. Azure runs in Microsoft Data Centres (currently in the US but planned to be located throughout the world) and your application runs within individual instances of virtual machines on that Azure fabric.

The pricing policy is also going to be based on usage, which allows you to start small (with a few computing instances) and then increase the number of instances (and therefore computing power) as your application grows and needs to be scaled for the increasing number of users. Imagine you’re writing the new “Facebook”. You could buy a handful of expensive servers and then buy more if/when the application’s user base takes off. Then you need to buy more and more until you’ve got a whole data centre of servers (all consuming masses of power) and a team of IT Administrators running them. Then your user base levels out, and possibly drops down to a more stable level, leaving you with excess capacity you’ve already paid for. Worse still, if your application never takes off then that initial investment in the first few servers will leave you seriously out of pocket. In contrast, cloud services like Windows Azure are paid for by usage. The cost per month will be related to your current storage usage and your compute instance usage. If you need more resources to scale out your application then you just pay more, which allows you to adjust your costs based on demand and removes the need for large upfront capital expenditure.
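To make the arithmetic behind that comparison concrete, here is a minimal sketch in Python. Every figure in it (the monthly demand, the price of a server, the price of an instance per month) is invented purely for illustration and is not Azure pricing; the function names are hypothetical too.

```python
def upfront_cost(peak_servers, price_per_server):
    """Capital cost when you must buy enough servers to cover peak demand."""
    return peak_servers * price_per_server


def usage_cost(instances_per_month, price_per_instance_month):
    """Pay-by-usage cost: each month you pay only for the instances in use."""
    return sum(n * price_per_instance_month for n in instances_per_month)


# Hypothetical demand: grows, peaks, then settles at a lower, stable level.
demand = [2, 4, 8, 16, 10, 6]

# Made-up prices, chosen only to show the shape of the calculation.
owned = upfront_cost(peak_servers=max(demand), price_per_server=3000)
rented = usage_cost(demand, price_per_instance_month=100)

print("Bought for peak:", owned)
print("Paid by usage:  ", rented)
```

The point is not the particular numbers but the shape of the two calculations: the bought-for-peak cost depends only on the highest month, while the usage cost follows demand up and back down again.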

These cost benefits are ideal for Web 2.0 start-ups but they can also benefit large Enterprises. The ability to develop an application within a low cost framework that also manages the hosting and usage monitoring of that application allows any development team to try out new ideas and dynamically move with the business. Cloud Computing could be a tool to enable an Enterprise to keep up with fast moving business opportunities at a low initial outlay and a low Total Cost of Ownership. An alternative model is where a platform like Windows Azure is deployed locally in the Enterprise data centre. The enterprise would then benefit from an efficient processing model for its data centre, forcing all new applications to be built to run on that platform. This would provide most of the benefits of Cloud Computing but with fewer issues around security, as data would not be leaving the Enterprise. Microsoft have so far only unofficially acknowledged this model and are not promoting it as an option with Windows Azure, although it will be interesting to see if they do promote this idea in the future.

Azure Services Platform

This is the stack that makes up Microsoft’s current Cloud Computing offering:

As you can see there are several offerings that sit on top of Azure, so let’s quickly look at these first, although we won’t go into detail on them:

Microsoft .NET Services: Offers distributed infrastructure services to support both cloud-based and locally based applications. This offering includes:

Access Control: Provides a claims-based implementation of identity federation and transformation in the cloud.

Service Bus: Allows you to expose your services (in the cloud or on premises) on the internet via a URI, without having to open up incoming ports inside your firewall.

Workflow: Runs Windows Workflow based workflows in the Cloud.

Microsoft SQL Services: Provides “SQL like” data services in the cloud based on SQL Server. This is effectively a premium storage service over the standard one provided by Azure Storage Services.

Live Services: There is a wealth of data locked within Microsoft Live applications (e.g. Live Mail) that is difficult to interact with. Live Services allows your applications to interact with this data. Building on Live Mesh, it also enables this data to be synchronised across a user’s numerous devices.

Windows Azure

This is the base environment where your application will sit. It is not an Operating System but that is the ideal way to imagine it. In the same way that an OS provides an abstraction from the system’s hardware and provides APIs to communicate with it, Windows Azure is an OS in the cloud. It sits on the virtual hardware and provides an environment (a fabric) for running your applications.

The deployment and management of your application instances is transparent to the developer but it’s useful to understand how Azure works under the covers. On deploying your application to the Cloud it is added to a Virtual Hard Disk which is then added to a Virtual Machine instance running on a Windows Server 2008 (Server Core) host server in a Microsoft Data Centre. Interestingly, a multi-cast message is sent to all available hosts, allowing multiple instances to be installed concurrently. The virtual machine running your application instance will share its host machine with other applications. Your instance may move around different host machines as required to maintain availability and to allow for server maintenance. Microsoft’s deployment strategy takes into account both Fault and Update Domains, ensuring that your instances are not all deployed on a single point of failure (e.g. on a single power point). For the current CTP release the specification of the VM is: 64-bit Windows Server 2008, 1.5-1.7 GHz CPU, 1.7 GB RAM. It is expected that the commercial release will allow for a choice of specifications. It’s worth noting that each Azure instance currently only sees one CPU, so multi-threading within your code will only benefit tasks that are not CPU intensive (for example, those that spend their time waiting on I/O).

Your application instances can perform one of two roles, Web or Worker:

A “Web Role” runs within IIS 7 and therefore effectively runs as an ASP.NET web application. This means that most types of application that can be run under IIS can be run in a Web Role, for example ASP.NET websites and WCF Service Applications. A Web Role allows inbound connections over HTTP and is used where inbound connections from the outside world are required.

A “Worker Role” is similar to a Windows Service except that it runs in the Cloud. It cannot accept inbound communications, but it can make outbound communications. It is a .NET Class Library that has a Start() method which is run at start-up, and it’s up to your code to keep itself alive (using sleeps and loops). Communication between roles/instances is via ‘Queues’ (more on these below). These instances are ideal for background processing of data, which allows a faster response from your Web Roles if the Web Roles off-load the intensive work onto these Worker Roles.
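The “sleeps and loops” pattern described above can be sketched as follows. This is a language-neutral illustration written in Python rather than the .NET code a real Worker Role would use; the WorkerRole class, its parameters and the max_idle_polls escape hatch are all hypothetical and exist only so that the example terminates. A real role would simply loop forever.

```python
import time


class WorkerRole:
    """Hypothetical stand-in for a worker role; not the Azure SDK class."""

    def __init__(self, get_work, handle, poll_interval=0.01, max_idle_polls=None):
        self.get_work = get_work              # callable returning an item or None
        self.handle = handle                  # callable that processes one item
        self.poll_interval = poll_interval    # seconds to sleep when idle
        self.max_idle_polls = max_idle_polls  # None means loop forever

    def start(self):
        """Keep the role alive: poll for work, sleep when there is none."""
        idle = 0
        while self.max_idle_polls is None or idle < self.max_idle_polls:
            item = self.get_work()
            if item is None:
                idle += 1
                time.sleep(self.poll_interval)  # nothing to do, so wait and poll again
                continue
            idle = 0
            self.handle(item)


# Illustrative work source: a plain list standing in for a queue.
pending = ["resize-image-1", "resize-image-2"]
done = []

worker = WorkerRole(
    get_work=lambda: pending.pop(0) if pending else None,
    handle=done.append,
    max_idle_polls=3,  # stop after three empty polls so the sketch ends
)
worker.start()
print(done)
```

The sleep between empty polls matters: without it the loop would spin and burn the single CPU that the instance sees.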

It is expected that more roles will emerge with the commercial release of the Azure platform.

Windows Azure Storage Services

Windows Azure currently provides four forms of storage: ‘Local’, ‘Queues’, ‘Tables’ and ‘Blobs’. It is important to note that SQL Data Services is a separate service that is not part of Azure Storage Services but is instead an add-on service that provides a more SQL like data framework. Interestingly, all these storage services are actually independent and fully accessible over HTTP(S) (via a RESTful interface) from both within and outside of the cloud. This means that your local Windows client application could store its data in the cloud even though the application is not hosted in the cloud. Alternatively you could save the data from your Cloud application in Azure Storage instances but then access it from your local on-premises application. All data writes to the storage services (this doesn’t include local storage) are triplicated for data redundancy across multiple servers.

Local Storage

This is not part of Windows Azure Storage Services but should be included in the storage discussion for completeness and to avoid confusion. Each Azure instance runs on a Virtual Hard Disk (as previously discussed) and this provides around 250GB of local transient disk space for temporary storage. As this data is transient and local only to that one instance of your application you can’t use this space for true data persistence, but it is useful where you need to temporarily store data to disk during your processing.

Queues

Queues primarily allow communication to occur between instances and allow Worker Roles to be passed work from Web Roles. For example, your Web Role may accept incoming data which it then persists to a queue for a Worker Role to pick up. The Worker Role constantly polls the queue for work to process. The queues are based on FIFO and, as queues are persisted to disk (and triplicated), they are very durable. This gives system designers a powerful feature that can be used to build transaction-like durability, which is not supported at the data layer, into their Azure applications. Messages that have been read remain in the queue but are marked as hidden, preventing them from being picked up by another instance. It is up to the application to explicitly delete the message once it has been actioned. If it is not deleted then it will become visible again on the queue after a specific period (less than a minute). This means that once the data is on the queue the application can fail and, once it is running again, it can pick up the last message and continue, as the message was not deleted. Adding queues into your application design therefore allows you to design durability into the architecture.
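The read, hide and delete behaviour described above can be modelled in a few lines. The sketch below is an in-memory Python model, not the Azure Queue API: the VisibilityQueue class and its method names are hypothetical, and the 30 second timeout is just a placeholder value consistent with “less than a minute”. The clock is injectable so that the passage of time can be simulated.

```python
import itertools
import time


class VisibilityQueue:
    """In-memory model of a queue whose read messages are hidden, not removed."""

    def __init__(self, visibility_timeout=30.0, clock=None):
        self.visibility_timeout = visibility_timeout  # assumed placeholder value
        self.clock = clock or time.monotonic
        self._messages = []             # each entry: [id, body, visible_at]
        self._ids = itertools.count(1)

    def put(self, body):
        """Add a message that is visible immediately."""
        self._messages.append([next(self._ids), body, 0.0])

    def get(self):
        """Return (id, body) of the oldest visible message and hide it, or None."""
        now = self.clock()
        for message in self._messages:
            if message[2] <= now:
                message[2] = now + self.visibility_timeout
                return message[0], message[1]
        return None

    def delete(self, message_id):
        """Remove a message for good once it has been processed."""
        self._messages = [m for m in self._messages if m[0] != message_id]


# Simulated clock so we can "wait" without sleeping.
now = [0.0]
queue = VisibilityQueue(visibility_timeout=30.0, clock=lambda: now[0])
queue.put("order-42")

first = queue.get()     # handed to a worker and hidden from everyone else
hidden = queue.get()    # None: the message is still hidden
now[0] = 31.0           # the worker failed without deleting; the timeout passes
retry = queue.get()     # the same message is visible again
queue.delete(retry[0])  # processed successfully this time
now[0] = 100.0
empty = queue.get()     # None: a deleted message never reappears
```

One consequence worth designing for: because a message can be handed out more than once, the processing done by the worker should be safe to repeat.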

BLOB Storage

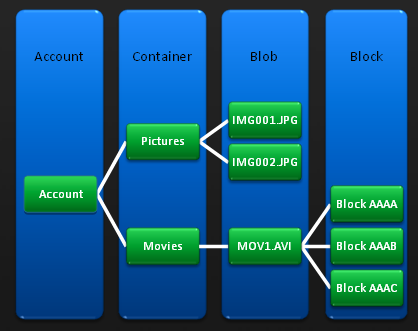

Blob storage provides a simple method of storing and retrieving BLOBs (Binary Large Objects). This is particularly useful for media content but can also be useful for persisting serialised objects. Blob storage works as a hierarchy in a similar way to a file system. You define an ‘Account’, which contains ‘Containers’, which hold the Blobs. These Blobs can also be held as ‘Blocks’, which allows for the handling of large Blobs. This relationship is shown in this diagram:

Remember that the Storage Services are separate from your Azure application and can be accessed independently. The hierarchical relationship described above is key to the RESTful URL used to retrieve this data. The URL looks like this:

http://<Account>.blob.core.windows.net/<Container>/<BlobName>

This provides a very user friendly URL that is easy to navigate and allows the designer to use the hierarchy to his/her advantage to make the data structures within the application as simple as possible.
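As a small illustration of how the hierarchy maps onto that URL, here is a Python helper that assembles it from its three parts. The function name and the account, container and blob names are made up for the example; only the URL pattern itself comes from the text above.

```python
from urllib.parse import quote


def blob_url(account, container, blob_name):
    """Build a blob URL from the account/container/blob hierarchy."""
    return "http://{0}.blob.core.windows.net/{1}/{2}".format(
        account,
        quote(container),
        quote(blob_name),  # percent-encode characters such as spaces
    )


# Hypothetical names, for illustration only.
url = blob_url("myaccount", "photos", "beach photo.jpg")
print(url)
```

Because the storage services are reachable over plain HTTP, a URL built this way can be requested from any client, whether or not it runs in the cloud, subject to the access permissions on the container.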

Tables

When we think of storage we tend to think of Relational Databases and table based schemas. The Table storage service provides a mechanism to store data in hierarchical tables but these are NOT relational tables. This seems to be a sticking point for many people who can’t see the benefits of having a table structure that isn’t relational. RDBMS systems have been around for so long now that the relational model is taken for granted as the best for all situations. The truth is of course that it depends on what sort of system you are trying to build. The view that Microsoft have taken is that Azure is a “platform for highly scalable and available applications” where the RDBMS model doesn’t always fit. Instead of a centralised, normalised data structure that minimises disk space and duplication, and provides complex query services, why not use the power of distribution and the cheap cost of disk storage to provide a fast, scalable and reliable data management system that effectively duplicates data?

Table Storage does not provide referential integrity, joins, group by, transactions or complex queries. If you determine that you really need a relational model for your application then you will need to consider the SQL Data Services offering (as mentioned briefly at the start of this article) and pay the premium. Table Storage, however, does provide cheap, scalable and durable data management with no fixed data schema. The idea is that you de-normalise your data and store it as required by the application, using multiple inserts and just simple queries. The data is not held as physical tables but merely as ‘entities’ with properties (like fields or columns). Each entity has a partition key and a row key which together provide uniqueness. Currently only these keys are indexed, so the choice of keys and partitions matters for scalability. The CTP version requires some creative uses of Row Keys and Partitions to produce the desired effect but it can truly scale.

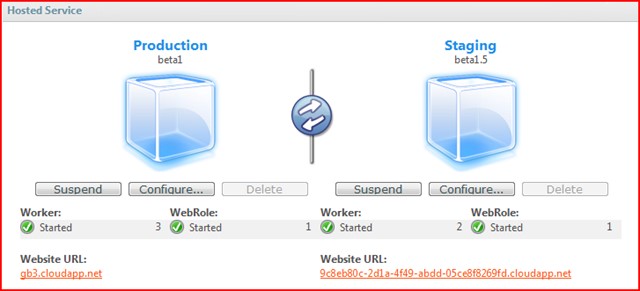
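The entity model described in this section can be illustrated with a toy, in-memory version. The Python sketch below is not the Table Storage API; the EntityTable class, its methods and the sample data are all hypothetical. It shows schema-less entities, uniqueness on the partition key and row key pair, and de-normalised data (the customer name is repeated on every order instead of being joined in from another table).

```python
class EntityTable:
    """Toy schema-less table keyed by (partition key, row key)."""

    def __init__(self):
        self._partitions = {}  # partition key -> {row key -> properties}

    def insert(self, partition_key, row_key, **properties):
        """Store an entity; the key pair must be unique."""
        rows = self._partitions.setdefault(partition_key, {})
        if row_key in rows:
            raise KeyError("entity already exists")
        rows[row_key] = dict(properties)

    def get(self, partition_key, row_key):
        """Look up a single entity by its full key."""
        return self._partitions[partition_key][row_key]

    def query_partition(self, partition_key):
        """Return all entities in one partition, ordered by row key."""
        rows = self._partitions.get(partition_key, {})
        return [rows[key] for key in sorted(rows)]


orders = EntityTable()
# Entities in the same table need not share the same properties.
orders.insert("customer-7", "order-001", customer_name="Ada", total=25)
orders.insert("customer-7", "order-002", customer_name="Ada", total=40, gift=True)
orders.insert("customer-9", "order-001", customer_name="Bob", total=5)

totals = [entity["total"] for entity in orders.query_partition("customer-7")]
print(totals)
```

Partitioning by customer keeps everything needed for one customer’s order page in a single partition, which is the kind of “store it as the application needs it” design the text has in mind.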

Development Lifecycle/Tools

Azure applications for the CTP need to be written in managed code (.NET), although the commercial version is expected to support unmanaged (native) code. After installing the Windows Azure SDK you are provided with locally installed mock versions of the Windows Azure fabric (to run your instances in) and Azure Storage Services. These allow you to run and debug your cloud application on your developer machine without an Azure account or internet access. Once you have completed your application you ‘publish’ it using Visual Studio. This runs it through CSPack.exe, which basically gathers the assemblies and related config and compresses them into a package. The developer then logs into the online “Azure Service Developer Portal” and uploads the ‘package’ to a staging area in the cloud. This staging area can be publicly accessed but it is separate from your live application instances. This allows you to test the application privately in the cloud environment and then promote it to live once testing is complete. The promotion process ensures that there is no downtime of your cloud application during the switch to the new version.

The Portal provides key information on your Windows Azure accounts and allows you to extract the logs for your applications and to view detailed reports on various metrics such as Network Usage, Storage, Virtual Machine hours etc.

Developing For Windows Azure

In order to develop an Azure application you must install the Windows Azure SDK and the Windows Azure Tools For Visual Studio. One point to note is that the SDK utilises IIS 7 and therefore requires Windows Vista on the developer machine. You also need SQL Server Express 2005, which the local Storage services use. If you want to actually host your finished application in the Cloud then you need to request a token from the Microsoft Azure web site. These are free for the CTP version but there is a waiting list, so register early.

You can find the new Azure project templates in the Visual Studio ‘New Project’ dialog, which allows you to create a basic Azure configured application as a starting point. The result is a Solution with some Azure specific items and an ASP.NET Project for the Web Role or a Class Library Project for the Worker Role (depending on what options you picked).

The Azure API provides you with access to the RoleManager class through which you can utilise Logging and Configuration utility classes. As you cannot debug your application once it’s in the cloud, it is important to add instrumentation to monitor the progress of your application and to report exceptions. This log output can be viewed in the local Development Fabric for development purposes, but once in the cloud it is automatically written to storage services from where you can download it. Logging is mostly a matter of calling RoleManager.WriteToLog().

Two key files in the solution are the Service Configuration files, which define your Azure application and the services it consumes. These files inform the Azure fabric how to handle your application, for example how many instances of Web Roles should be deployed for your application and what Storage Accounts to use. By changing the instance value from 1 to 5 you suddenly have 5 instances of your application running, with the scalability that this provides. Whilst you can still use web.config for configuration values, these should be limited to those that don’t need to change at runtime, as changes to web.config will require you to re-package and re-deploy your application. For runtime dynamic configuration use the ServiceConfiguration files.
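To show what “changing the instance value from 1 to 5” amounts to, here is a Python sketch that edits the instance count in a configuration fragment. The XML is a simplified illustration: the element and attribute names are an assumption modelled on the general shape of a service configuration file, and the service, role and setting names are invented. Check the files generated by the SDK templates for the real schema. In practice you would simply edit the value by hand or through the portal.

```python
import xml.etree.ElementTree as ET

# Simplified, illustrative fragment; element names are assumptions,
# and the real file generated by the SDK carries more detail.
CONFIG = """
<ServiceConfiguration serviceName="MyService">
  <Role name="WebRole">
    <Instances count="1" />
    <ConfigurationSettings>
      <Setting name="StorageAccount" value="myaccount" />
    </ConfigurationSettings>
  </Role>
</ServiceConfiguration>
""".strip()


def instance_count(config_xml, role_name):
    """Read the number of instances configured for a role, or None."""
    root = ET.fromstring(config_xml)
    for role in root.findall("Role"):
        if role.get("name") == role_name:
            return int(role.find("Instances").get("count"))
    return None


def set_instance_count(config_xml, role_name, count):
    """Return a copy of the configuration with a new instance count."""
    root = ET.fromstring(config_xml)
    for role in root.findall("Role"):
        if role.get("name") == role_name:
            role.find("Instances").set("count", str(count))
    return ET.tostring(root, encoding="unicode")


scaled = set_instance_count(CONFIG, "WebRole", 5)
print(instance_count(CONFIG, "WebRole"), "->", instance_count(scaled, "WebRole"))
```

The application code itself is untouched by this change, which is the appeal of the model: scaling out is a configuration decision rather than a development task.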

For consuming the Storage Services from your Cloud application it is recommended that you use the ‘Storage Client’ project that is provided in the Samples section of the SDK. This project provides an abstraction from the REST API. This abstraction is recommended because it gets your new project started quickly, and also because the REST API is expected to change as the platform matures and the ‘Storage Client’ project will shield you from these changes. There’s no point learning something that is going to change.

This is just a very quick overview. For more information I recommend you download the SDK and then run through the hands-on labs in the Azure Services Training Kit.

Conclusion

Windows Azure is not the definitive answer from Microsoft for what a Cloud Computing platform should look like. It is merely a very early CTP release of their future platform, a step on the way to the next computing paradigm, whatever that may eventually look like. This is a big step though, as the functionality provided is enough to get a very capable system up and running and hosted entirely in the cloud. It does this by building on the development tools we already know (Visual Studio and managed code) but it also requires, in some areas, a shift in thinking away from the more traditional approaches to software design we’ve been using for several years.

References

Microsoft Windows Azure Site:

http://www.microsoft.com/azure/default.mspx

Windows Azure SDK:

http://www.microsoft.com/downloads/details.aspx?familyid=B44C10E8-425C-417F-AF10-3D2839A5A362&displaylang=en

Windows Azure Services Training Kit:

http://www.microsoft.com/downloads/details.aspx?FamilyID=413e88f8-5966-4a83-b309-53b7b77edf78&displaylang=en

Windows Azure Tools For Visual Studio:

http://www.microsoft.com/downloads/details.aspx?familyid=59E8FC0C-C399-4AB7-8A93-882D8E74B67A&displaylang=en

Azure Services Platform Developer Center:

http://msdn.microsoft.com/en-us/azure/default.aspx

Deploying a Service on Windows Azure:

http://msdn.microsoft.com/en-us/library/dd203057.aspx